Samsung announced today that it has begun mass-producing its HBM2 (second-generation High Bandwidth Memory) DRAM chips, a memory technology that the company claims will offer speeds “more than seven times faster than the current DRAM performance limit”.

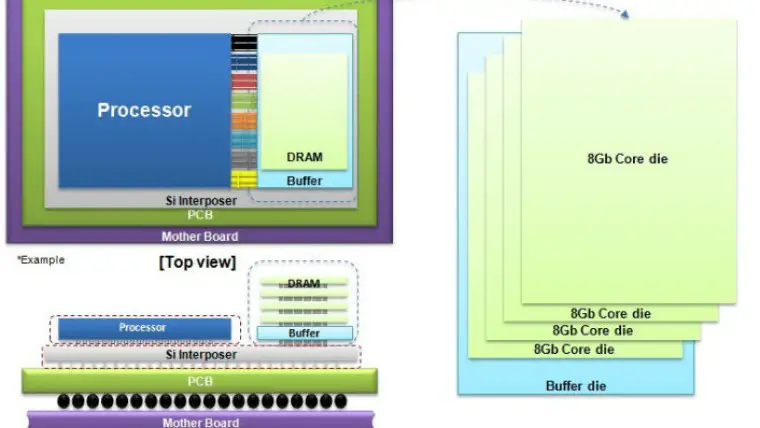

The company is currently manufacturing a 4GB package on a 20-nanometer process. What makes it special is that it delivers 256GBps of bandwidth (roughly double that of the HBM1 stacks used in AMD’s R9 Nano and R9 Fury X) in a tiny form factor, thanks to a stack of one buffer die and four 8Gb core dies connected by a very dense array of TSVs (through-silicon vias).

If that sounds complicated, an easier way to think of it is that a single 4GB HBM2 package can theoretically offer about seven times the bandwidth of a single 4Gb GDDR5 chip of the kind found in Nvidia’s GTX 970 and GTX 980 graphics cards.
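For readers who want to check the numbers, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted above (four 8Gb core dies, 256GBps, "roughly double" HBM1, "7 times" GDDR5); the variable and function names are made up for illustration, and the HBM1 and GDDR5 values are simply what those ratios imply, not measured or official specifications.

```python
BITS_PER_BYTE = 8


def stack_capacity_gb(core_dies: int, gbit_per_die: int) -> float:
    """Capacity of a stacked package in gigabytes, given dies of N gigabits each."""
    return core_dies * gbit_per_die / BITS_PER_BYTE


# Figures taken from the article.
hbm2_capacity_gb = stack_capacity_gb(core_dies=4, gbit_per_die=8)
hbm2_bandwidth_gbps = 256.0  # GB/s per package

# Values implied by the article's ratios (assumptions, not official specs).
implied_hbm1_gbps = hbm2_bandwidth_gbps / 2        # "roughly double" HBM1
implied_gddr5_chip_gbps = hbm2_bandwidth_gbps / 7  # "7 times" a 4Gb GDDR5 chip

print(f"HBM2 package capacity: {hbm2_capacity_gb:.0f} GB")
print(f"Implied HBM1 bandwidth: {implied_hbm1_gbps:.0f} GB/s per stack")
print(f"Implied GDDR5 bandwidth: {implied_gddr5_chip_gbps:.1f} GB/s per chip")
```

The point of the exercise is that the headline comparison is per package versus per chip: a graphics card carries several GDDR5 chips, so the card-level gap depends on how many chips or stacks it uses.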

Samsung’s HBM2 DRAM is very fast and small, which should let Nvidia and AMD cram more VRAM into future video cards that can drive 4K monitors. It is also built for high reliability, with ECC (error-correcting code) functionality built in. That makes it well suited to high-performance computing workloads such as parallel computing and machine learning.

The South Korean tech giant will slowly ramp up production volume and is planning to release an 8GB HBM2 package later this year. As for when you’ll be enjoying this memory technology in actual products, Nvidia and AMD are working on integrating it with their Pascal and Polaris architectures, respectively (at least for the high-end segments).

HBM2 DRAM could also have an impact on future SoCs, as well as the APUs that will be used in the next generation of consoles. The current Xbox One and PS4 sometimes struggle to offer acceptable performance with current-gen games such as Fallout 4.